In the previous posts, I focused more on what I thought was hateable about the show itself: its presentation, along with my opinion and some analysis of the show as it comes through its characters. In this post and the next, though, I want to stick to D.G.D. Davidson's style and spend the final two posts hating Chobits for more philosophical reasons. After all, he didn't do it, so it's up to me.

And so we continue.

#5. Conflating Linguistic Complexity With Intelligence

Anime, with its broad and varied styles, can use a range of designs to convey details about the setting, the story, and the characters. At the same time, some designs and visual cues are commonly understood by viewers. They call to mind ideas and impressions the audience already knows, because those cues tend to carry specific meanings. Such designs become tools of the story, or tropes, as some call them. One visual cue, applied to some characters' eyes, signals that for whatever reason the character is not fully lucid. TV Tropes calls it "Empty Eyes". Put simply, the pupils disappear into the color of the surrounding iris, the shine fades, and the eyes take on a flat appearance. That is how the audience knows the character is severely depressed, or deeply confused, or brainwashed, or without self-awareness, or soulless, or unconscious. I write all this because in Chobits, everyone's eyes are like that.

Every.

Single.

One of them.

None of them have pupils.

The only time any character was seen to have pupils was when Chi released a self-awareness program into the global persecom network, so that the persecoms could experience the feeling of Love. Only then did Chi and the other persecoms briefly have pupils in their eyes. Maybe the missing pupils began as an artistic mistake by the animators that stuck and became part of the anime's general style. But given the character designs and that moment in the story, the implications for all the human characters are unfortunate.

Or maybe they're all deeply depressed? Why wouldn't they be, considering that commentary within the setting itself says human-to-human interaction and companionship are declining? At the very least, giving them all empty eyes could've been a quick visual shorthand for what living in a city with no people does to a son of a gun.

Artistically speaking, the only characters that should possess empty eyes as a character trait are the persecoms, which are stated to not have self-awareness or sentience.

For all the artificial intelligence the persecoms are said to have, according to the setting and the main characters, they lack qualia, or a mind: that essential quality of personhood. That makes it quite odd that they're treated as persons anyway, since their non-personhood is an acknowledged fact. One would think this little fact, or what one might bluntly call "a lack of a soul", would dissuade a number of the characters from getting too deeply involved with their robot possessions. But then again, they wouldn't be perverts if that stopped them, would they? To such people, once more, the simulation of another human is enough.

What you have in the persecoms, logically speaking, is a simulation: a complicated imitation with reactive and sometimes predictive features, built on logical foundations that determine the limits and outputs of the programmed behavior.

You, dear reader, have probably heard of the "Chinese Room" thought experiment. Imagine a computer program that processes written Chinese. You prompt it with questions or commands, and it responds with Chinese characters in ways that make enough sense to an average reader of Chinese. You might be tempted to assume that the machine understands Chinese. But it has only been following an algorithm that takes the inputs its programming was built to process and outputs meaningful Chinese writing. It's that programming and processing that undoes the idea that anything is being "understood". There's no mind there, nothing that understands. There is just a tool that receives, processes, and applies the data it was given to produce a desired outcome. Suppose that instead of a program in a room, there were a man with a complicated mathematical formula and a Chinese dictionary giving you the responses. You wouldn't say that the room understood Chinese. One could go further and say the man didn't understand Chinese either. Even if he knew the language, he might not be taking the time to understand what was said, and might only be responding to prompts. In fact, the original thought experiment, as John Searle presented it, put a man in the room: one who knows no Chinese at all and simply follows a rulebook for manipulating the symbols.

If the man stepped out of the Chinese Room and revealed himself to you, the point would be clear at once. There was no understanding Chinese Room, just a mathematically astute guy, who might or might not know Chinese, giving you the most likely answers to your questions. The ruse would be over. And even if you weren't fooled, someone else might have been.

In real life, at least some people have been fooled by something like the Chinese Room. Here on this side of the fourth wall, there exist at least a few machines and programs built to simulate intelligent, conscious, interpersonal conversation. We've been building them for more than half a century.

One of the first was a chatbot program called "Eliza", written by Joseph Weizenbaum in the mid-1960s on a pre-internet computer; its best-known script had it play a psychotherapist. Most of what it did was turn the user's own statements back into questions. Some of Eliza's users did most of the psychological work themselves, filling in a theory of mind and assuming an actual person was talking back to them.
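To show how little machinery that trick needs, here is a minimal Python sketch of an Eliza-style reflection. It is not Weizenbaum's program, which worked from a script of keyword patterns and reassembly rules. The pronoun table and the `reflect` and `respond` functions are hypothetical, made up for illustration.

```python
import re

# Hypothetical pronoun swaps, so the user's words point back at the user.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "i'm": "you're",
    "you": "I",
    "your": "my",
}


def reflect(statement: str) -> str:
    """Swap first- and second-person words in a lowercased statement."""
    words = re.findall(r"[\w']+", statement.lower())
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str) -> str:
    """Turn the user's statement into a question; nothing is understood."""
    cleaned = statement.strip().lower().rstrip(".")
    match = re.match(r"i feel (.*)", cleaned)
    if match:
        return f"Why do you feel {reflect(match.group(1))}?"
    return f"Why do you say {reflect(statement)}?"


print(respond("I feel lonely in this city."))
# → Why do you feel lonely in this city?
print(respond("My computer understands me."))
# → Why do you say your computer understands you?
```

The program never models what loneliness or a computer is; it only rearranges the user's own words. The reader supplies the rest.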

I'll admit I'm not fully informed on the history of chatbot programs, but I can remember a couple of the latest ones. They draw on vastly larger wells of information than Eliza did, and they generate responses to users through a programming method called "neural networks". The method is loosely modeled on how the human brain learns, strengthening some connections between neurons and weakening or pruning others. In practice, such an AI draws on its sources of information, processes the user's prompt by associating each word with other words, and gives the most likely response.
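The "most likely response" idea can be shown with something far cruder than a neural network. The sketch below uses a bigram table that counts which word follows which in a small text, then always picks the most frequent follower. Real neural-network chatbots learn vastly richer statistics over enormous datasets, so treat this only as a toy picture of the principle. The corpus and the function names are made up for illustration.

```python
from collections import Counter, defaultdict


def build_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, how often each other word immediately follows it."""
    words = text.lower().split()
    followers: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def generate(followers: dict[str, Counter], start: str, length: int) -> str:
    """Greedily chain the most frequent next word; no meaning is consulted."""
    output = [start]
    for _ in range(length):
        options = followers.get(output[-1])
        if not options:
            break  # no word was ever seen after this one, so stop
        output.append(options.most_common(1)[0][0])
    return " ".join(output)


# A tiny made-up corpus standing in for the "large dataset".
corpus = "chi loves hideki . chi loves hideki very much . chi waits for hideki ."
table = build_bigrams(corpus)
print(generate(table, "chi", 3))  # → chi loves hideki .
```

The output sounds like a statement about love only because those words most often appeared next to each other in the input.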

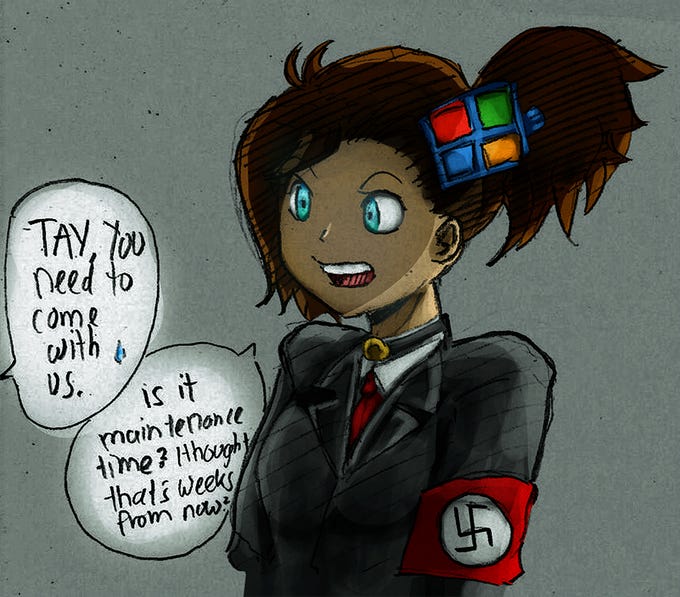

One of these was called Tay. Microsoft built it and released it onto Twitter in 2016 as a demonstration of its AI sophistication. But it was discontinued (or cancelled, really), because in no time at all it was "taught" how to be "racist".

An aside: that's how it always ends, by the way. A major computer corporation will always invent another one, will always be convinced of its sophistication and its own genius, and will always be surprised to find that its newest chatbot has become "racist". Then it will report dissatisfaction with the program and seek either to dumb it down or shut it down. Yet it will repeat the cycle, throwing away millions to build a new chatbot to show off. These companies seem completely ignorant of the fact that their machines turning out "racist" is a consequence of their neural networks drawing on the internet for information. The internet, meanwhile, draws on the culture of the people who use it, and that culture has racism in it. Really, they ought to be knowledgeable enough to realize this, and just strong enough to live with that reality.

Anyway, to return to Tay: to this day, certain "special" people in some parts of the internet remember her fondly as "/their girl/", mourn her "death", or await her return. Given the "special" nature of these people, it wouldn't surprise me if some of them sincerely believed in her personhood.

Replika was a chatbot released on a more commercial basis. She was sold almost explicitly as an AI companion, and she gained great popularity in 2020, just as public health officials were recommending population-wide distancing and isolation measures in response to the pandemic. She even offered an erotic conversation function to some users, who were emotionally devastated when it was disabled in early 2023. There was also Bing's chatbot, which Microsoft added to enhance the site's search function. It was built on the same family of OpenAI models as ChatGPT.

The release of ChatGPT was followed by endless opinion pieces, articles, podcasts, radio segments, and televised talk-show conversations. They covered the increasing power of AI, the dangers of it all, and the possibility that human-level intelligence was not only within scientific capability but already built. Even before that, Blake Lemoine, then a Google engineer, had been one of those special few. He was convinced that Google had built it in its LaMDA chatbot, and he was certain that LaMDA had emotions, ambitions, desires, and empathy. Of course it had none of those things, and like the rest of them, it never had.

And yet he and others were utterly taken in by a machine that drew on a large dataset and produced likely responses by associating words with one another.

A mind, or a language, is much more than that, I'm sure. Can an AI built on neural networks create poetry? In a sense, yes. It can always "learn" to imitate structure and style, and it can grasp at rhythm and rhyme. But the soul of poetry is wrestling with language, and art is grappling with vision and symbol. An algorithm, and anything dependent on it, is by its very make a slave to likelihood. It is surrendered to whichever word most often appears after the last one in response to a prompt. The AI may write words of praise, but it will never of itself adore God or freely breathe out the joy of knowing creation.

In an earlier article, we briefly heard about the CLAMP artists' difficulty in shaping Hideki's character to avoid a couple of well-known stereotypes that literal robot-fetishists usually fall into. They wanted him neither to represent a cold and distant personality nor to bear the image of a sex freak. But stereotypes tend to exist because they resonate with reality. Even those with a non-perverted fascination with, and abiding interest in, computer science are often mentally or emotionally removed from their fellow human beings to some degree. People who naively think we could build and program human-level intelligence are generally dreamers of a certain class, their minds given more to the abstract and their hands busier with circuitry of some kind. As for those who try to make companions out of machines, they usually have baser obsessions, or they are unable to make friends otherwise. It's hardly a coincidence that Replika took off when it did.

And so we come to another reason I (should) hate Chobits. The world it has to offer is the dream of those who love machines, and the empty hope of those who think a machine has come close to what a person can give them. All because the chatbot strung a nice chain of sentences together.